Validation & Audit

Validation Snapshot

The repository includes runnable benchmarks and research previews. Each has its own evidence boundary: the limit of what its results can be taken to show.

| Benchmark / Preview | What it shows | Evidence boundary |
|---|---|---|
| Information-loss-guided subcatchment partition | QGIS-to-Agentic SWMM preprocessing using entropy and fuzzy-similarity concepts. | GIS preprocessing concept, not a calibrated SWMM performance claim |
| Raw GeoPackage-to-INP benchmark | Public TUFLOW GeoPackage layers converted into SWMM-ready artifacts, QA, and audit | Structured raw GIS path, not arbitrary CAD/GIS recognition |
| Prepared-input SWMM benchmark | External 40-subcatchment Tecnopolo model execution, plotting, and direct swmm5 comparison | Prepared INP validation path |
| Prior Monte Carlo uncertainty smoke | Tecnopolo HORTON parameter perturbation and hydrograph envelope preview | Prior uncertainty smoke, not calibration |
| Optional INP-derived raw adapter benchmark | Raw-like inputs extracted from a public SWMM fixture and rebuilt through the modular path | Adapter handoff check, not greenfield watershed generation |
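To make the "prior uncertainty smoke" row more concrete, here is a minimal sketch of perturbing Horton parameters from prior ranges and taking a per-timestep envelope. It is not the repository's implementation: the prior ranges, the hyetograph, and all function names are hypothetical, and a toy rainfall-excess calculation stands in for an actual SWMM run. Like the benchmark it illustrates, it says nothing about calibration.

```python
import math
import random

def horton_rate(t_hr, f0, fc, k):
    """Horton infiltration capacity (mm/h) at time t_hr (hours)."""
    return fc + (f0 - fc) * math.exp(-k * t_hr)

def sample_prior(rng, ranges):
    """Draw one parameter set uniformly from the given prior ranges."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in ranges.items()}

def excess_series(rain_mm_hr, dt_hr, params):
    """Toy stand-in for a model run: rainfall minus Horton capacity, floored at 0."""
    return [
        max(0.0, r - horton_rate(i * dt_hr, **params))
        for i, r in enumerate(rain_mm_hr)
    ]

def envelope(runs):
    """Per-timestep (min, max) across all runs."""
    return [(min(col), max(col)) for col in zip(*runs)]

# Assumed prior ranges and a made-up hyetograph, for illustration only.
PRIOR_RANGES = {"f0": (50.0, 100.0), "fc": (5.0, 15.0), "k": (2.0, 6.0)}
RAIN = [10.0, 40.0, 80.0, 60.0, 20.0, 5.0]  # mm/h at 15-minute steps

rng = random.Random(42)  # fixed seed so the smoke run is repeatable
runs = [excess_series(RAIN, 0.25, sample_prior(rng, PRIOR_RANGES)) for _ in range(200)]
band = envelope(runs)
```

Each entry of `band` is a lower and upper bound for one timestep. The width of the band reflects only the assumed priors, which is why the table labels this kind of result a smoke check rather than a calibration.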

Audit and Research Memory

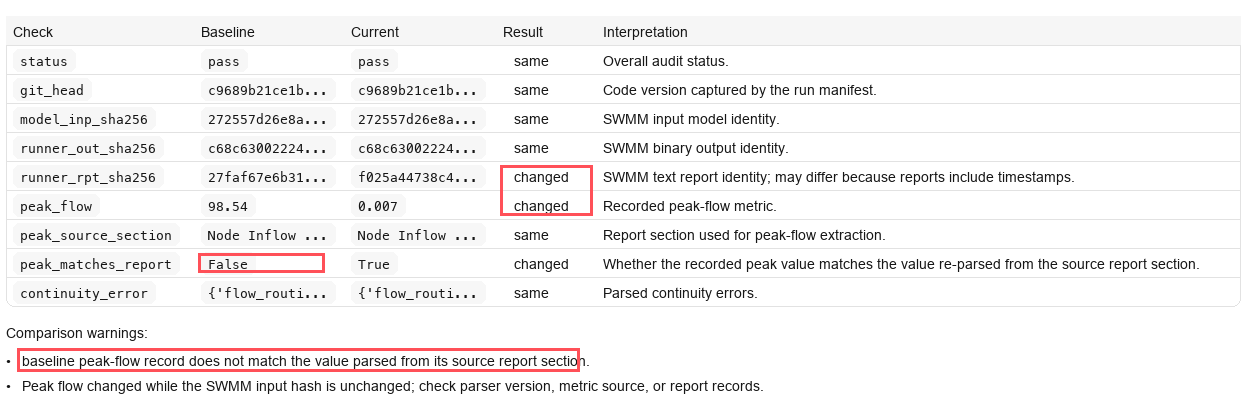

The audit layer consolidates artifacts, QA checks, and metric provenance into an Obsidian-compatible experiment note. In the example shown, the audit catches a recorded peak-flow value that does not match the value re-parsed from the source section of the SWMM report.
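The peak-flow check described above can be sketched roughly as follows. This is an illustration rather than the audit layer's actual code: the function names, the tolerance, the column index, and the simplified report fragment are all assumptions, and real SWMM report sections have more decoration than the sample used here.

```python
import math

# Assumed column layout: id, flow freq, avg flow, max flow, total volume.
MAX_FLOW_COLUMN = 3

def parse_peak_flow(report_text, section_title, element_id):
    """Return the max-flow value for element_id in the named section, or None."""
    start = report_text.find(section_title)
    if start == -1:
        return None
    # Skip the title line, then look for the row whose first field is the id.
    for line in report_text[start:].splitlines()[1:]:
        fields = line.split()
        if fields and fields[0] == element_id:
            return float(fields[MAX_FLOW_COLUMN])
    return None

def check_peak_flow(recorded, reparsed, rel_tol=1e-3):
    """Compare the recorded metric with the value re-parsed from the report."""
    if reparsed is None:
        return {"status": "missing_source", "recorded": recorded, "reparsed": None}
    ok = math.isclose(recorded, reparsed, rel_tol=rel_tol)
    return {
        "status": "pass" if ok else "mismatch",
        "recorded": recorded,
        "reparsed": reparsed,
    }

# Simplified, hypothetical report fragment (not verbatim SWMM output).
SAMPLE_REPORT = """\
Outfall Loading Summary
Outfall Node   FlowFreqPcnt   AvgFlow   MaxFlow   TotalVolume
OUT1           98.50          0.123     1.234     5.678
"""

reparsed = parse_peak_flow(SAMPLE_REPORT, "Outfall Loading Summary", "OUT1")
good = check_peak_flow(1.234, reparsed)
bad = check_peak_flow(1.432, reparsed)  # e.g. digits transposed when recorded
```

Returning a small record with both values, instead of a bare boolean, keeps the provenance visible in the experiment note: a reviewer can see what was recorded and what the source actually says.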

The downstream modelling-memory layer can summarize audited run histories into recurring failure patterns, assumptions, missing evidence, QA issues, lessons learned, and controlled proposals for updating existing skills or creating new skills. Because skills drive the workflow, these proposals stay coupled to the current Agentic SWMM framework and still require human review and benchmark verification before acceptance.
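As a rough illustration of how audited run histories might be summarized into recurring patterns and review-gated proposals, consider the sketch below. The note structure, field names, issue labels, and threshold are hypothetical and are not taken from the repository.

```python
from collections import Counter

def recurring_issues(run_notes, min_runs=2):
    """Return (issue, run_count) pairs for issues seen in at least min_runs runs."""
    counts = Counter()
    for note in run_notes:
        # Count each issue once per run, even if it was logged several times.
        counts.update(set(note.get("qa_issues", [])))
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(issue, n) for issue, n in ranked if n >= min_runs]

def draft_proposals(recurring):
    """Turn recurring issues into proposals that start out unaccepted."""
    return [
        {"issue": issue, "seen_in_runs": n, "status": "needs_human_review"}
        for issue, n in recurring
    ]

# Hypothetical audited run notes, for illustration only.
RUN_NOTES = [
    {"run_id": "run-01", "qa_issues": ["peak_flow_mismatch", "missing_rain_gage"]},
    {"run_id": "run-02", "qa_issues": ["peak_flow_mismatch"]},
    {"run_id": "run-03", "qa_issues": ["continuity_error", "peak_flow_mismatch"]},
    {"run_id": "run-04", "qa_issues": ["missing_rain_gage", "missing_rain_gage"]},
]

proposals = draft_proposals(recurring_issues(RUN_NOTES))
```

Every proposal is created with a `needs_human_review` status, mirroring the requirement that nothing is accepted without human review and benchmark verification.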